RTSP Server Setup and Pipeline Examples

Summary

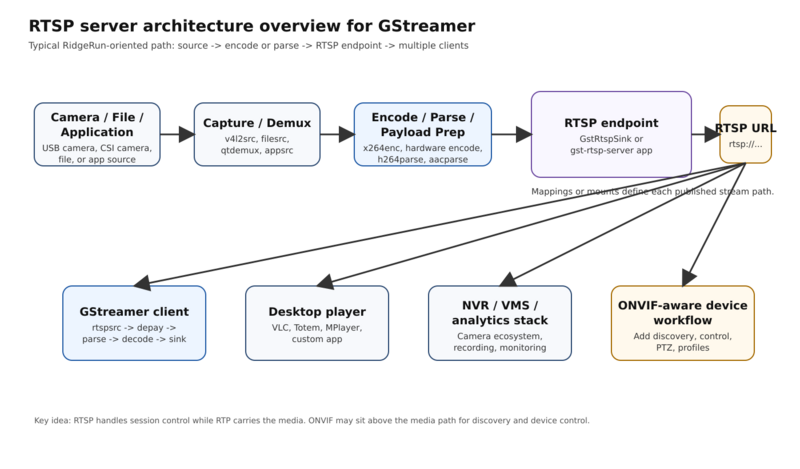

A GStreamer RTSP server publishes encoded media at an rtsp:// URL so clients can connect, negotiate transport, and receive RTP packets. In practice, the two most useful paths are the upstream gst-rtsp-server library for custom applications and GstRtspSink when you want a pipeline-native RTSP server element.

To enable RTSP with GStreamer, build a pipeline that produces encoded media, publish it through an RTSP server layer, and verify the resulting rtsp:// URL with a simple client. For fast command-line workflows, RidgeRun's GstRtspSink is the most direct path, while gst-rtsp-server is the upstream choice when you want a custom application or a Python server.

Key takeaways

- Use GstRtspSink for fast command-line validation and pipeline-native integration.

- Use `gst-rtsp-server` when you need application-level control over mounts, authentication, and lifecycle behavior.

- Set the port and mount path explicitly during development so clients always connect to a known URL.

- Validate the published URL with both a simple client and an explicit `rtspsrc` pipeline.


The basic GStreamer RTSP server pipeline is: source -> encode or parse -> RTSP server endpoint -> rtsp://host:port/path. If you want the smallest working example from the command line, use GstRtspSink; if you want an application-level server in Python or C, use the upstream gst-rtsp-server library.

Before you start

This page assumes you already have a working local GStreamer installation, an encoder that matches your target format, and a way to inspect installed elements with gst-inspect-1.0.

The most common setup mistake is assuming every RTSP implementation uses the same default port.

| Implementation | Default port behavior | Practical recommendation |
|---|---|---|
| `gst-rtsp-server` | Common development default is 8554 | Use 8554 for local testing and examples |
| GstRtspSink | Defaults to RTSP service port (554) if `service` is not overridden | Explicitly set `service=8554` or another unprivileged port |
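The port difference in the table can be checked before launching a pipeline. The following is a minimal sketch, assuming a Linux-like system where ports below 1024 usually require elevated privileges. The helper names `needs_privileges` and `can_bind` are hypothetical and are not part of GstRtspSink or gst-rtsp-server.

```python
import socket

# Assumption: on typical Linux setups, ports below 1024 need root or a capability.
PRIVILEGED_PORT_LIMIT = 1024


def needs_privileges(port: int) -> bool:
    """Return True if binding this port usually requires elevated privileges."""
    return 0 < port < PRIVILEGED_PORT_LIMIT


def can_bind(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if a TCP socket can be bound to host:port right now.

    A False result means the bind failed, either because the port is in use
    or because the current user is not allowed to bind it.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False
    return True


if __name__ == "__main__":
    for port in (554, 8554):
        kind = "privileged" if needs_privileges(port) else "unprivileged"
        print(f"port {port}: {kind}")
```

Running a check like this before starting the server makes it obvious when a pipeline fails only because it tried to bind port 554 as a normal user.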

Also keep this distinction clear:

- `rtspsrc` is a client-side source element used for playback.

- `rtspclientsink` publishes to an RTSP server.

- GstRtspSink hosts RTSP streams directly with the `rtspsink` sink element.

- `gst-rtsp-server` is the upstream application library for building servers.

Two ways to serve RTSP with GStreamer

Use the upstream gst-rtsp-server library when

Choose the upstream library when the RTSP server is part of a larger application and you need full control over mounts, authentication policies, object lifetimes, integration with your event loop, or dynamic pipeline creation.

This is the better path for:

- Products that need custom business logic around sessions or mounts.

Use GstRtspSink when

Choose GstRtspSink when you want to expose one or more RTSP URLs directly from a GStreamer pipeline without writing a separate RTSP server application.

This is the better path for:

- Fast prototyping with `gst-launch-1.0`.

- Embedded products that already revolve around pipeline composition.

- Multi-stream and multi-mapping RTSP serving from one pipeline.

- Teams that want a commercial plugin with RidgeRun support options.

How to enable an RTSP stream

The mechanics are simple even if the element names vary by project:

- Capture or read media from a source such as `v4l2src`, `filesrc`, or an application source.

- Encode the media or, if it is already encoded, parse it into the correct format.

- Expose it through an RTSP server layer.

- Publish a mapping or mount such as `/camera`, `/stream1`, or `/movie`.

- Test the resulting URL with `playbin`, `rtspsrc`, or a known external client.

A minimal RTSP streaming pipeline looks like this:

v4l2src / filesrc / appsrc

|

encode or parse

|

RTSP serving layer

|

rtsp://host:port/mapping

If you forget to assign a mapping or mount, or the client requests a path that does not match it, clients will often fail with a "not found" error.
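A mapping mismatch can be detected without a full media client by sending a single RTSP DESCRIBE request and reading the status line. The sketch below uses only the Python standard library. The function names are hypothetical, and it assumes the server answers an unknown path with a 404 status, which is the usual behavior but should be confirmed against your server.

```python
import socket


def rtsp_url(host: str, port: int, mapping: str) -> str:
    """Join host, port, and mapping into an rtsp:// URL with a single slash."""
    return f"rtsp://{host}:{port}/{mapping.lstrip('/')}"


def describe_request(url: str, cseq: int = 1) -> bytes:
    """Build a minimal RTSP/1.0 DESCRIBE request for the given URL."""
    lines = [
        f"DESCRIBE {url} RTSP/1.0",
        f"CSeq: {cseq}",
        "Accept: application/sdp",
        "",  # the two empty entries produce the blank line
        "",  # that terminates the request headers
    ]
    return "\r\n".join(lines).encode("ascii")


def status_code(response: bytes) -> int:
    """Extract the numeric status from a line such as 'RTSP/1.0 200 OK'."""
    status_line = response.split(b"\r\n", 1)[0].decode("ascii", "replace")
    return int(status_line.split(" ", 2)[1])


def probe(host: str, port: int, mapping: str, timeout: float = 2.0) -> int:
    """Send DESCRIBE to a running server and return its status code."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(describe_request(rtsp_url(host, port, mapping)))
        return status_code(sock.recv(4096))
```

With a server running, `probe("127.0.0.1", 8554, "/stream1")` would be expected to return 200 for a published mapping and an error status for a path that was never mapped.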

Basic GstRtspSink pipeline

The following command is the quickest way to create a visible RTSP endpoint from the command line:

gst-launch-1.0 videotestsrc is-live=true ! x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! h264parse config-interval=-1 ! video/x-h264,mapping=/stream1 ! rtspsink service=8554

This is the expected command line output for the previous command (tested on x86 with GStreamer 1.24.2):

Setting pipeline to PAUSED ...

Pipeline is live and does not need PREROLL ...

Pipeline is PREROLLED ...

Setting pipeline to PLAYING ...

New clock: GstSystemClock

Redistribute latency...

Redistribute latency...

Redistribute latency...

Redistribute latency...

00:19.7 / 99:99:99.

You can validate it immediately with:

gst-launch-1.0 playbin uri=rtsp://127.0.0.1:8554/stream1

This is the expected command line output (tested on x86 with GStreamer 1.24.2):

Setting pipeline to PAUSED ...

Pipeline is live and does not need PREROLL ...

Progress: (open) Opening Stream

Pipeline is PREROLLED ...

Prerolled, waiting for progress to finish...

Progress: (connect) Connecting to rtsp://127.0.0.1:8554/stream1

Progress: (open) Retrieving server options

Progress: (open) Retrieving media info

Progress: (request) SETUP stream 0

Progress: (open) Opened Stream

Setting pipeline to PLAYING ...

New clock: GstSystemClock

Progress: (request) Sending PLAY request

Redistribute latency...

Progress: (request) Sending PLAY request

Redistribute latency...

Progress: (request) Sent PLAY request

Redistribute latency...

Redistribute latency...

Redistribute latency...

Redistribute latency...

Redistribute latency...

Redistribute latency...

0:00:01.6 / 99:99:99.

This example deliberately sets service=8554 so it can run as a normal user during development.
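When the same pipeline has to be started from a script, the command can be assembled as an argument list so that the mapping and port stay explicit. This is a minimal sketch that only builds the arguments. The function name `rtspsink_command` is hypothetical, and the pipeline text is the one shown above with the mapping and `service` values made configurable.

```python
import shlex
from typing import List


def rtspsink_command(mapping: str = "/stream1", service: int = 8554) -> List[str]:
    """Return the gst-launch-1.0 argument list for the test-pattern RTSP pipeline."""
    pipeline = (
        "videotestsrc is-live=true ! "
        "x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! "
        "h264parse config-interval=-1 ! "
        f"video/x-h264,mapping={mapping} ! "
        f"rtspsink service={service}"
    )
    # shlex.split keeps each element, property, and '!' as its own argument,
    # so the list can be passed to a process launcher without a shell.
    return ["gst-launch-1.0", *shlex.split(pipeline)]


if __name__ == "__main__":
    print(" ".join(rtspsink_command()))
```

The resulting list can be handed to `subprocess.run(rtspsink_command())` on a machine where GStreamer and GstRtspSink are installed.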

How can I use GStreamer to stream RTSP video from a USB camera?

USB camera that outputs raw video

If the USB camera outputs raw frames, encode them before publishing:

gst-launch-1.0 v4l2src device=/dev/video0 ! videoconvert ! video/x-raw,framerate=30/1 ! x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! h264parse config-interval=-1 ! video/x-h264,mapping=/usb ! rtspsink service=8554

This is the expected terminal output on successful execution (tested on x86 with GStreamer 1.24.2):

Setting pipeline to PAUSED ...

Pipeline is live and does not need PREROLL ...

Pipeline is PREROLLED ...

Setting pipeline to PLAYING ...

New clock: GstSystemClock

Redistribute latency...

Redistribute latency...

Redistribute latency...

Redistribute latency...

0:00:23.4 / 99:99:99.

You can validate the stream with playbin:

gst-launch-1.0 playbin uri=rtsp://127.0.0.1:8554/usb

USB camera that already outputs H.264

If the camera already provides H.264, skip the software encode and just parse it:

gst-launch-1.0 v4l2src device=/dev/video0 ! video/x-h264,framerate=30/1 ! h264parse config-interval=-1 ! video/x-h264,mapping=/usb ! rtspsink service=8554

This option usually lowers CPU load and is the better baseline for embedded or thermally constrained devices.
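The two USB camera variants differ only in the stage before `h264parse`, which a small helper can make explicit. The sketch below builds the pipeline description as a string and is an illustration of the choice, not a RidgeRun utility. The function name and the `camera_outputs_h264` flag are hypothetical.

```python
def usb_camera_pipeline(
    device: str = "/dev/video0",
    camera_outputs_h264: bool = False,
    mapping: str = "/usb",
    service: int = 8554,
) -> str:
    """Return a gst-launch-1.0 pipeline description for a USB camera."""
    if camera_outputs_h264:
        # Camera already delivers H.264: skip the software encoder.
        head = f"v4l2src device={device} ! video/x-h264,framerate=30/1 ! "
    else:
        # Raw frames: convert and encode in software before parsing.
        head = (
            f"v4l2src device={device} ! videoconvert ! video/x-raw,framerate=30/1 ! "
            "x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! "
        )
    tail = (
        "h264parse config-interval=-1 ! "
        f"video/x-h264,mapping={mapping} ! rtspsink service={service}"
    )
    return head + tail


if __name__ == "__main__":
    print("gst-launch-1.0", usb_camera_pipeline(camera_outputs_h264=True))
```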

Practical notes

- Replace `/dev/video0` with the correct camera node.

- Confirm the actual camera caps with `v4l2-ctl --list-formats-ext` or `gst-device-monitor-1.0`.

- For embedded SoC CSI cameras, use the correct camera source element for the platform rather than `v4l2src`, for instance `nvarguscamerasrc` for NVIDIA Jetson or `qtiqmmfsrc` for Qualcomm.

- For hardware-accelerated targets, move to the native encoder as soon as the end-to-end path works.
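Checking the camera caps can also be scripted. The sketch below scans text in the style printed by `v4l2-ctl --list-formats-ext` for pixel format codes and reports whether H.264 is offered. The sample text is illustrative only, not captured from a real device, and the exact output layout is an assumption that may vary between v4l-utils versions. The function names are hypothetical.

```python
import re

# Illustrative sample only; real output depends on the camera and tool version.
SAMPLE_OUTPUT = """\
ioctl: VIDIOC_ENUM_FMT
    Type: Video Capture

    [0]: 'YUYV' (YUYV 4:2:2)
        Size: Discrete 1280x720
    [1]: 'H264' (H.264, compressed)
        Size: Discrete 1920x1080
"""

# Matches entries such as "[1]: 'H264'" and captures the quoted format code.
FORMAT_ENTRY = re.compile(r"\[\d+\]:\s*'([^']+)'")


def list_fourccs(text: str) -> list:
    """Return the quoted pixel format codes in the order they appear."""
    return FORMAT_ENTRY.findall(text)


def camera_offers_h264(text: str) -> bool:
    """Return True if an H264 format entry is present in the listing."""
    return "H264" in list_fourccs(text)


if __name__ == "__main__":
    print(list_fourccs(SAMPLE_OUTPUT), camera_offers_h264(SAMPLE_OUTPUT))
```

On a real system the text would come from running the tool, for example with `subprocess.run(["v4l2-ctl", "--list-formats-ext"], capture_output=True, text=True).stdout`.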

How to stream a local video file using GStreamer over RTSP

There are two common ways to serve a local file over RTSP:

- Restream an already encoded file by demuxing and parsing the tracks.

- Decode and re-encode the file if the original codecs are not suitable for your RTSP target path.

When the input file already contains H.264 video and AAC audio, this direct-restream pattern is efficient and simple:

gst-launch-1.0 rtspsink name=sink service=8554 appsink0::sync=true appsink1::sync=true qtdemux name=demux filesrc location=input.mp4 ! demux. demux. ! queue ! aacparse ! audio/mpeg,mapping=/movie ! sink. demux. ! queue ! h264parse ! video/x-h264,mapping=/movie ! sink.

If your source file does not already use codecs suited to RTSP, such as the H.264 video and AAC audio assumed above, transcode it before it reaches the rtspsink stage.

Client validation:

gst-launch-1.0 rtspsrc location=rtsp://127.0.0.1:8554/movie name=src src. ! rtph264depay ! h264parse ! avdec_h264 ! queue ! autovideosink src. ! rtpmp4adepay ! aacparse ! avdec_aac ! queue ! autoaudiosink

Basic GStreamer RTSP server Python example

The following Python example uses the upstream gst-rtsp-server bindings. It shows the minimum steps: create a server, define a launch string, add a mount point, attach the server, and run the GLib main loop.

#!/usr/bin/env python3

import gi

gi.require_version("Gst", "1.0")

gi.require_version("GstRtspServer", "1.0")

from gi.repository import Gst, GLib, GstRtspServer

Gst.init(None)

server = GstRtspServer.RTSPServer()

server.set_service("8554")

factory = GstRtspServer.RTSPMediaFactory()

factory.set_shared(True)

factory.set_launch(

"( "

"v4l2src device=/dev/video0 ! videoconvert ! "

"x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! "

"rtph264pay name=pay0 pt=96 config-interval=1 "

")"

)

mounts = server.get_mount_points()

mounts.add_factory("/camera", factory)

server.attach(None)

print("RTSP stream ready at rtsp://127.0.0.1:8554/camera")

GLib.MainLoop().run()

Important notes:

- The launch string must produce one or more RTP payloaders named `pay0`, `pay1`, and so on.

- Replace `v4l2src` with `videotestsrc is-live=true` if you want a camera-free smoke test.

- The same pattern works for file streaming by replacing the source portion of the launch string.
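The notes above can be turned into a small pre-flight check on the launch string before it is passed to `factory.set_launch()`. This sketch only manipulates strings, so it runs without GStreamer installed. The helper names are hypothetical, and the check assumes payloader numbering starts at `pay0` with no gaps, following the "pay0, pay1, and so on" convention.

```python
import re

# Encoder and payloader portion taken from the server example above.
ENCODE_AND_PAY = (
    "x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! "
    "rtph264pay name=pay0 pt=96 config-interval=1"
)


def make_launch(source: str) -> str:
    """Wrap a source description into a launch string for the media factory."""
    return f"( {source} ! videoconvert ! {ENCODE_AND_PAY} )"


def payloader_names(launch: str) -> list:
    """Return all element names of the form payN found in the launch string."""
    return re.findall(r"\bname=(pay\d+)\b", launch)


def payloaders_are_sequential(launch: str) -> bool:
    """Check that payloaders exist and are numbered pay0, pay1, ... in order."""
    names = payloader_names(launch)
    return bool(names) and names == [f"pay{i}" for i in range(len(names))]


if __name__ == "__main__":
    launch = make_launch("videotestsrc is-live=true")
    print(launch)
    print("payloaders ok:", payloaders_are_sequential(launch))
```

Swapping the argument to `make_launch` is enough to move between the camera and the test pattern, for example `make_launch("v4l2src device=/dev/video0")`.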

Low-latency server tuning

Ultra-low-latency RTSP starts with the source and encoder, not only the network.

Recommended first steps:

- Keep the pipeline live and avoid unnecessary buffering.

- Use hardware capture and hardware encode where possible.

- Prefer direct parse-and-restream paths when the source is already encoded correctly.

- Keep GOP length short enough for fast join and recovery.

- Avoid unnecessary colorspace conversions and copies.

- Validate that queue sizes are not hiding latency.

- Reduce client-side latency once the server pipeline is stable.
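Two of these steps can be made concrete with simple arithmetic and a client pipeline string. The sketch below estimates the upper bound on how long a newly joined client may wait for a keyframe, given the encoder's `key-int-max` and the frame rate, and builds a client pipeline that lowers the `rtspsrc` `latency` property. The function names and the 200 ms value are illustrative assumptions, not recommended settings.

```python
def worst_case_keyframe_wait(key_int_max: int, fps: float) -> float:
    """Upper bound, in seconds, on the wait for the next keyframe.

    A client that joins just after a keyframe may need to wait up to
    key_int_max frames before it can start decoding.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return key_int_max / fps


def client_pipeline(url: str, latency_ms: int = 200) -> str:
    """Return an explicit rtspsrc playback pipeline with a reduced jitter buffer."""
    return (
        f"rtspsrc location={url} latency={latency_ms} ! "
        "rtph264depay ! h264parse ! avdec_h264 ! autovideosink"
    )


if __name__ == "__main__":
    # key-int-max=30 at 30 fps, as in the pipelines on this page.
    print(worst_case_keyframe_wait(30, 30.0), "s")
    print("gst-launch-1.0", client_pipeline("rtsp://127.0.0.1:8554/stream1"))
```

With `key-int-max=30` at 30 frames per second the bound is one second. Doubling the keyframe interval doubles it, which is why a shorter GOP helps fast join and recovery at the cost of bitrate efficiency.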

For RidgeRun-based optimization work, the most relevant supporting pages are Embedded GStreamer Performance Tuning, GStreamer Encoding Latency in NVIDIA Jetson Platforms, GstRtspSink - NVIDIA Jetson, and GstRTPNetCC.

Frequently asked questions

- How do I set up a basic GStreamer RTSP server pipeline?

- Build a pipeline that produces encoded media, expose it through an RTSP server layer such as `gst-rtsp-server` or GstRtspSink, and then test the resulting `rtsp://` URL with a client.

- How do I enable RTSP streaming from a USB camera?

- Use the correct camera source, encode or parse the camera output into a supported codec such as H.264, and publish it through a server endpoint with a known mapping like `/usb`.

- Can I stream a local video file over RTSP?

- Yes. If the file is already encoded with RTSP-friendly codecs, demux and parse it; otherwise decode and re-encode before the RTSP-serving stage.

- Should I start with GstRtspSink or gst-rtsp-server?

- Start with GstRtspSink when pipeline-first fast prototyping matters, and start with `gst-rtsp-server` when the RTSP server must be deeply integrated into your application.

- What is the simplest validation client?

- `playbin` is the fastest smoke test, while explicit `rtspsrc` pipelines are better for debugging and low-latency work.

- Which path is better for a product: `gst-rtsp-server` or `rtspsink`?

- Use `gst-rtsp-server` when you need application-level control. Use GstRtspSink when you want a pipeline-native server and a faster time to a working RTSP endpoint.

- What is the first client command I should try?

- Start with `gst-launch-1.0 playbin uri=rtsp://127.0.0.1:8554/stream1` because it validates the URL with the fewest moving parts.

- Can I serve multiple streams from one pipeline?

- Yes. That is a common use case for GstRtspSink, provided the pipeline, mappings, and hardware resources are designed for it.

Related RidgeRun pages

- GstRtspSink

- GstRtspSink - Basic usage

- GstRtspSink - Simple Examples

- GstRtspSink - Live File Streaming

- GstRtspSink - Multicast

- GstRtspSink - RTSP over HTTP Tunneling

- GstRtspSink - Basic Authentication

- GstRtspSink - Performance

- Embedded GStreamer Performance Tuning

- GStreamer Encoding Latency in NVIDIA Jetson Platforms

- GstRTPNetCC

- GStreamer RTSP Streaming/RTSP Client Playback and Troubleshooting

- GStreamer RTSP Streaming/Commercial RTSP Solutions and Low-Latency Services

References